Interobserver variability for the first evaluation of the two halves of... | Download Scientific Diagram

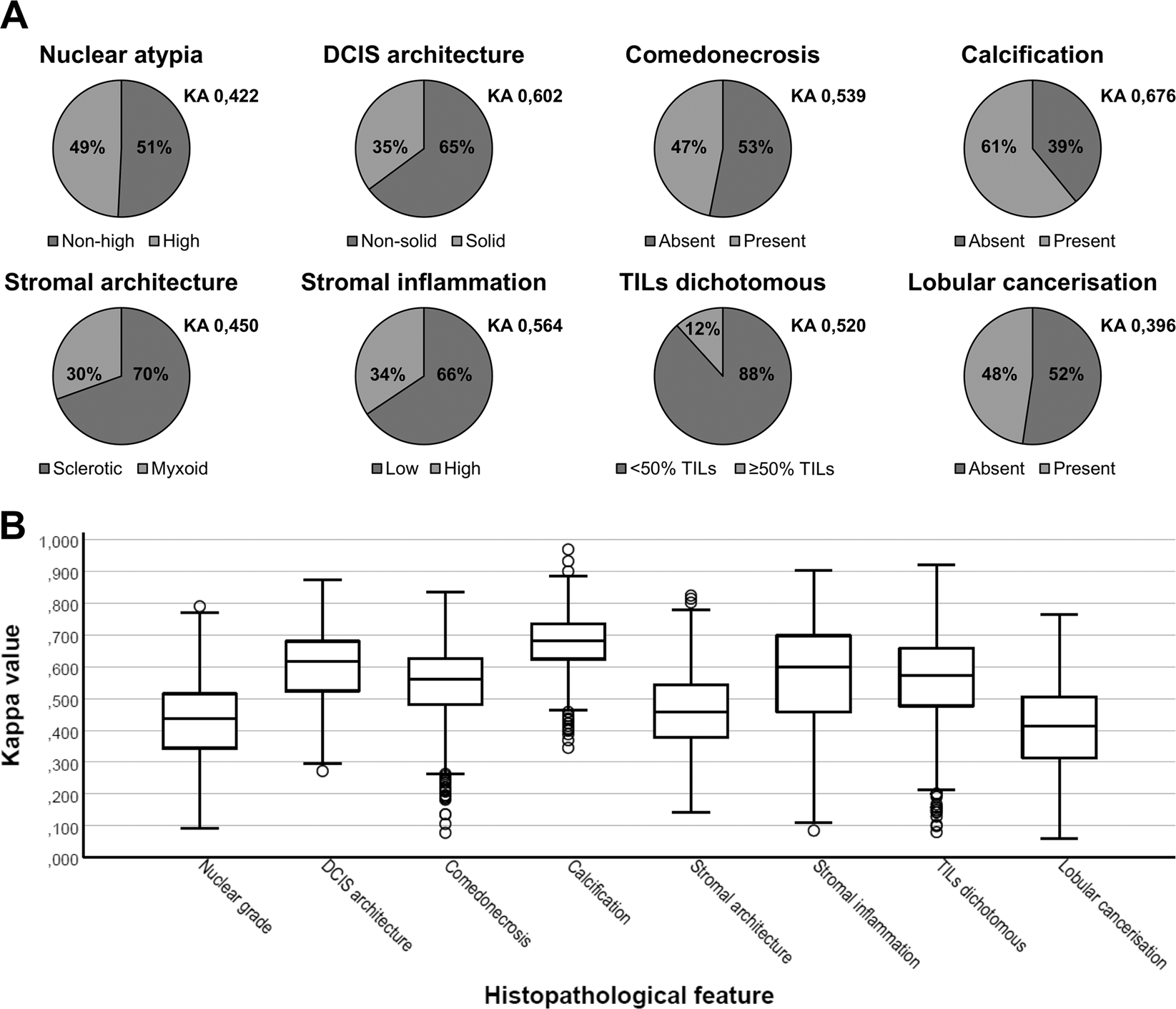

Interobserver variability in upfront dichotomous histopathological assessment of ductal carcinoma in situ of the breast: the DCISion study | Modern Pathology

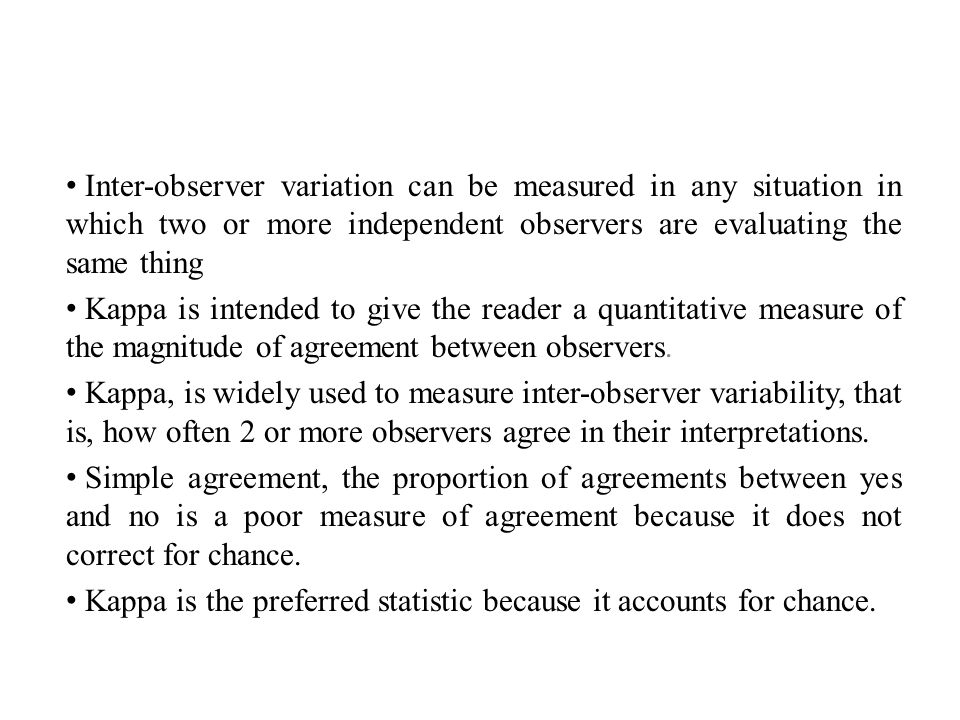

Tips for learners of evidence-based medicine: 3. Measures of observer variability (kappa statistic) | CMAJ

Accuracy of the Interpretation of Chest Radiographs for the Diagnosis of Paediatric Pneumonia | PLOS ONE

Inter-observer variability between general pathologists and a specialist in breast pathology in the diagnosis of lobular neoplasia, columnar cell lesions, atypical ductal hyperplasia and ductal carcinoma in situ of the breast

Inter-observer agreement and reliability assessment for observational studies of clinical work - ScienceDirect

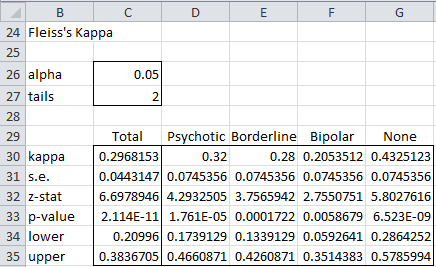

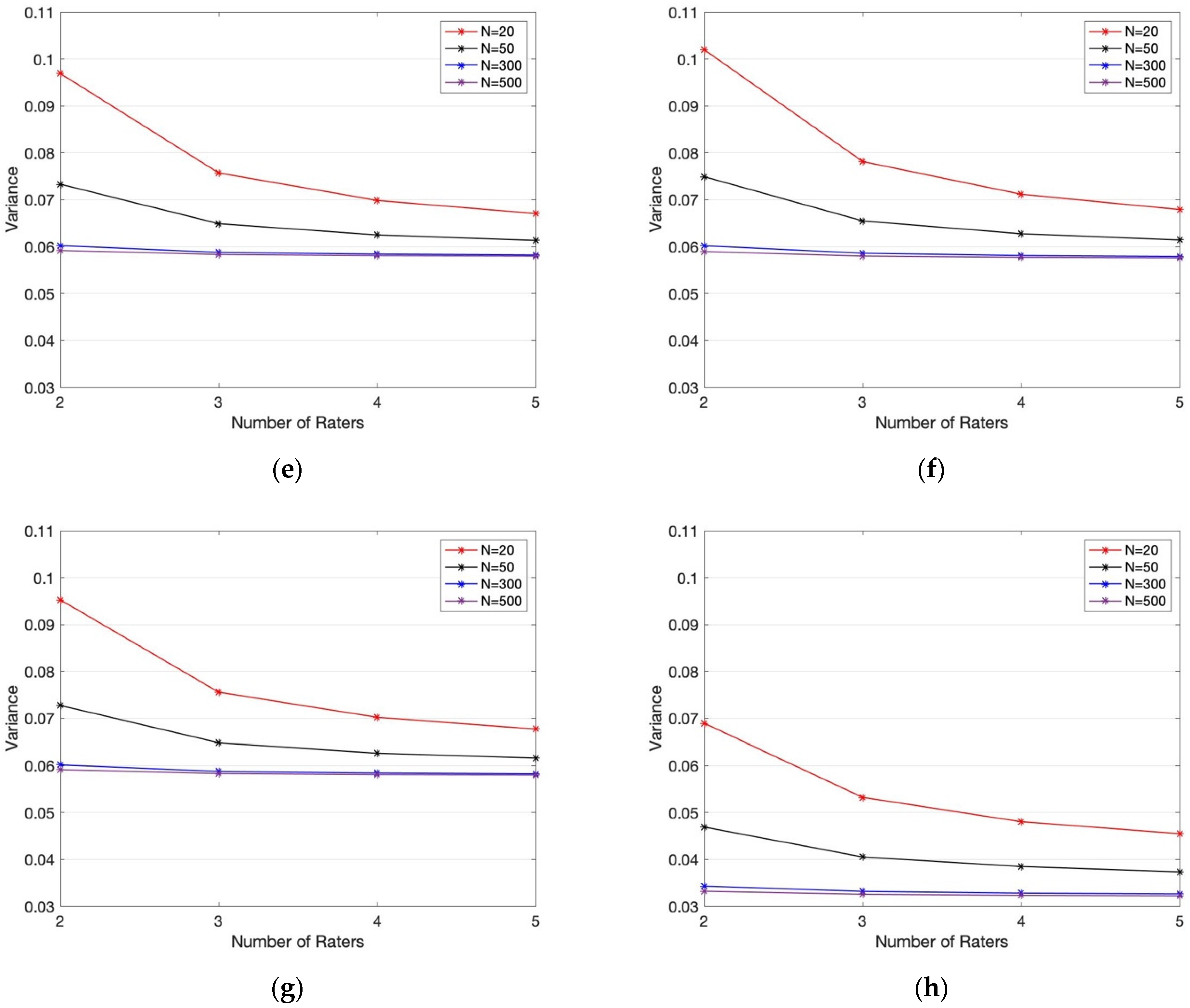

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

Interobserver variability (Cohen's Kappa) and Intraclass correlation of... | Download Scientific Diagram

Inter-observer variability (kappa) for the different Clinical Pulmonary... | Download Scientific Diagram

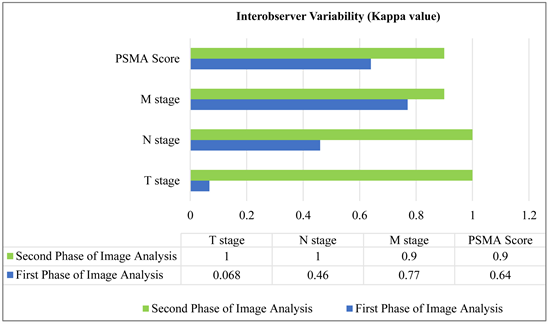

Inter-Observer Variability in the Interpretation of 68Ga-PSMA PET-CT Scan according to PROMISE Criteria

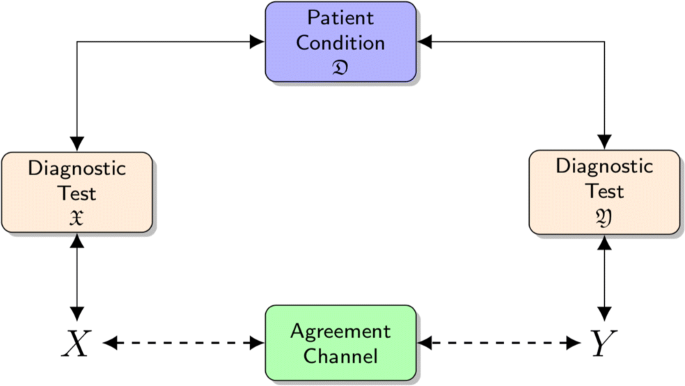

Beyond kappa: an informational index for diagnostic agreement in dichotomous and multivalue ordered-categorical ratings | SpringerLink

Interobserver variability in the interpretation of computed tomography following aneurysmal subarachnoid hemorrhage | Journal of Neurosurgery, Volume 115, Issue 6 (2011)

Interobserver variability impairs radiologic grading of primary graft dysfunction after lung transplantation - ScienceDirect