Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

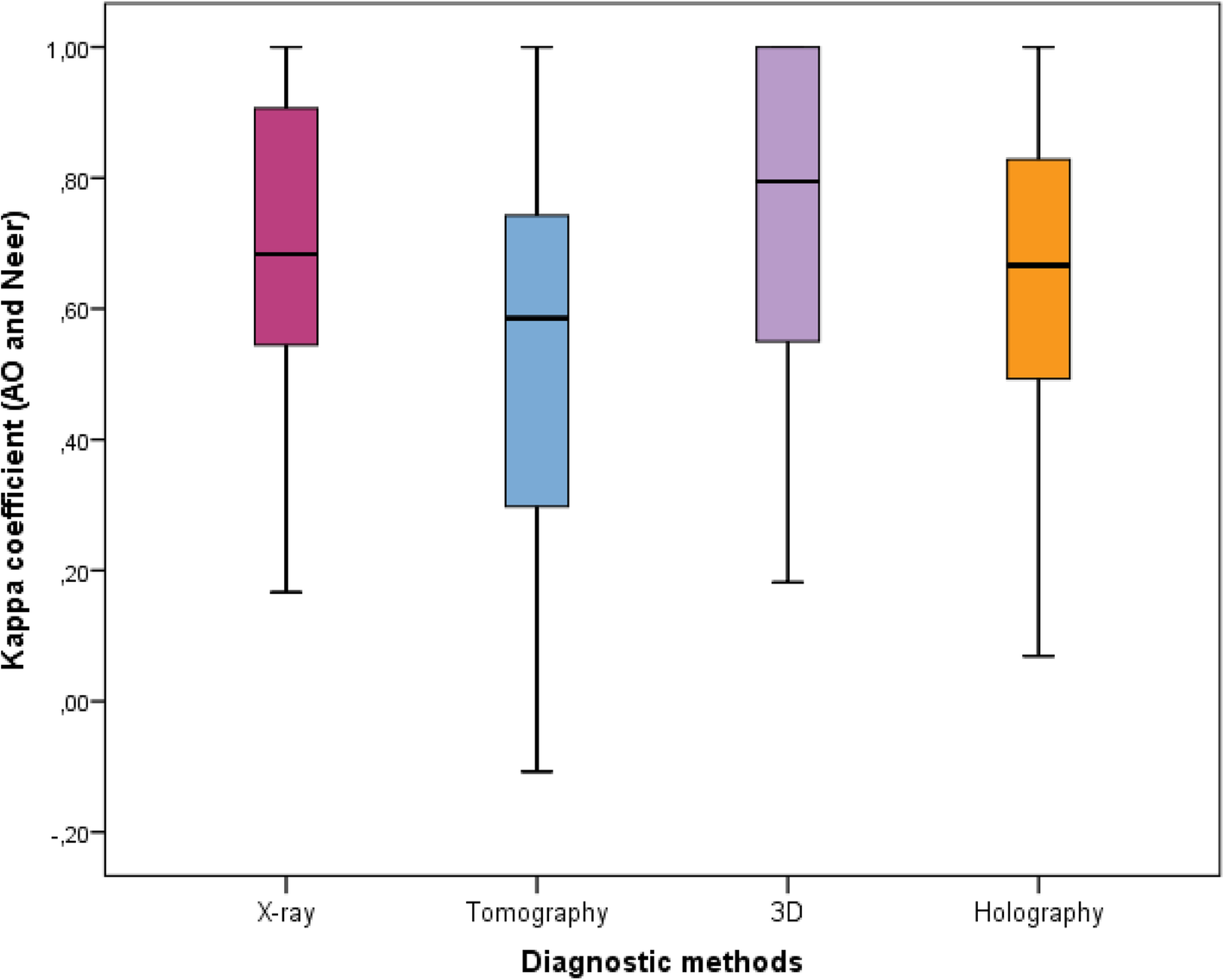

Inter-observer reliability of alternative diagnostic methods for proximal humerus fractures: a comparison between attending surgeons and orthopedic residents in training | Patient Safety in Surgery | Full Text

Intraobserver Reliability on Classifying Bursitis on Shoulder Ultrasound - Tyler M. Grey, Euan Stubbs, Naveen Parasu, 2023

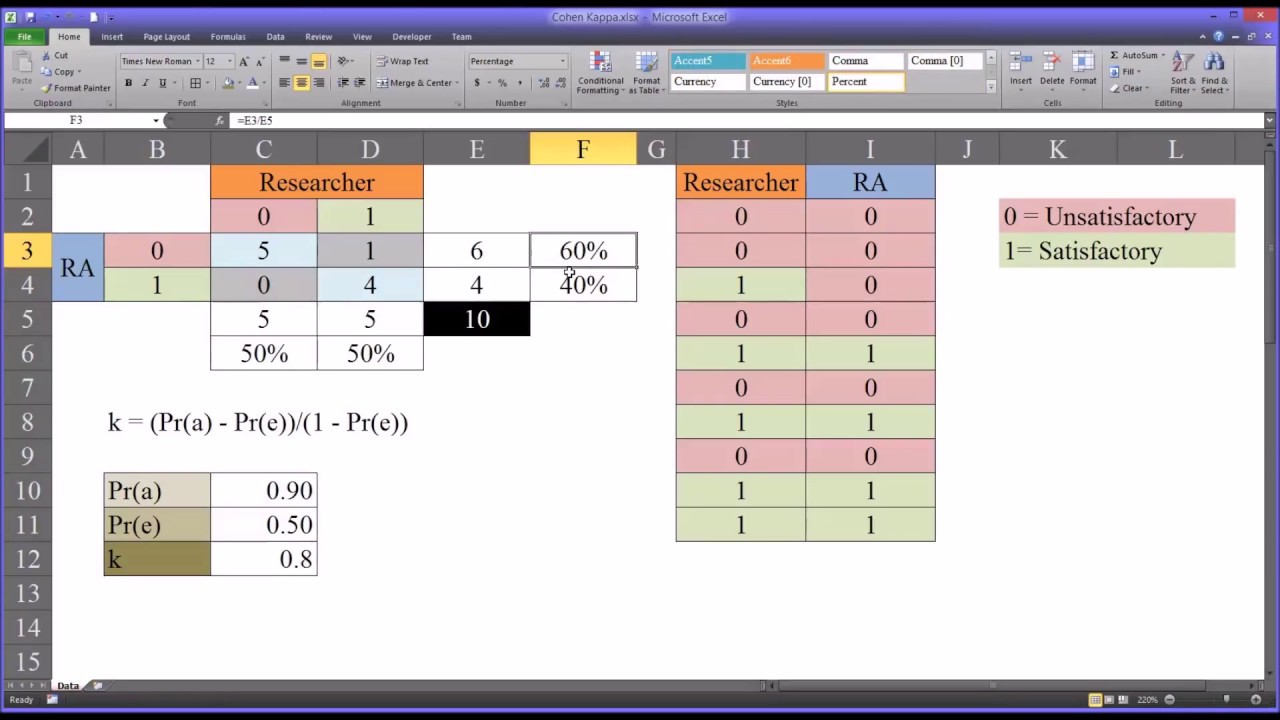

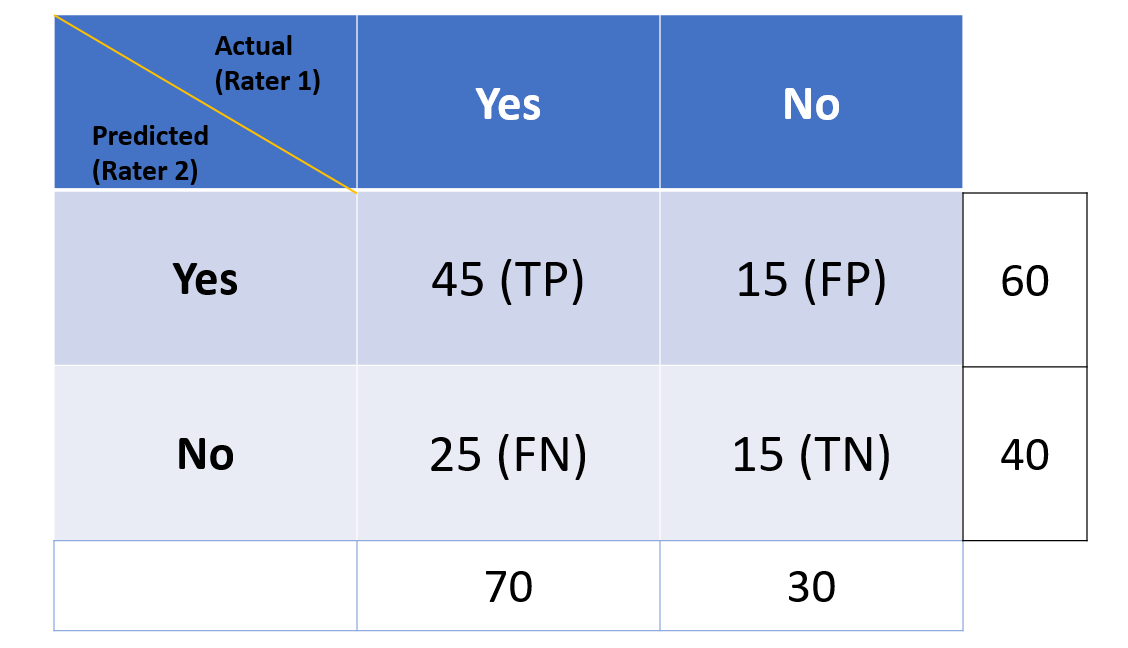

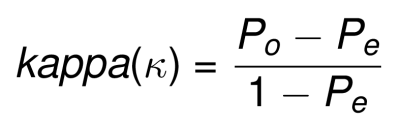

Understanding the calculation of the kappa statistic: A measure of inter-observer reliability | Semantic Scholar

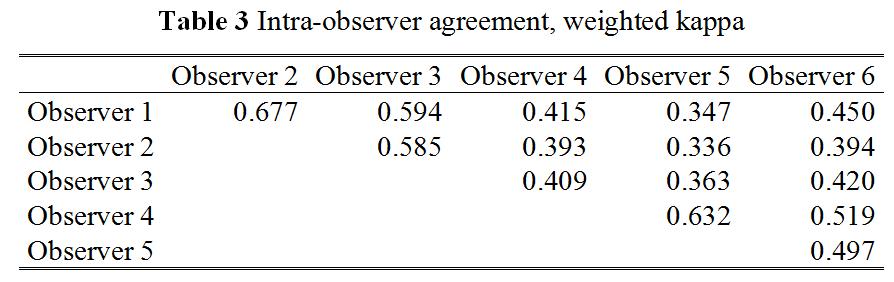

Inter- and intra-observer variability for the assessment of coronary artery tree description and lesion EvaluaTion (CatLet©) angiographic scoring system in patients with acute myocardial infarction | Chinese Medical Journal

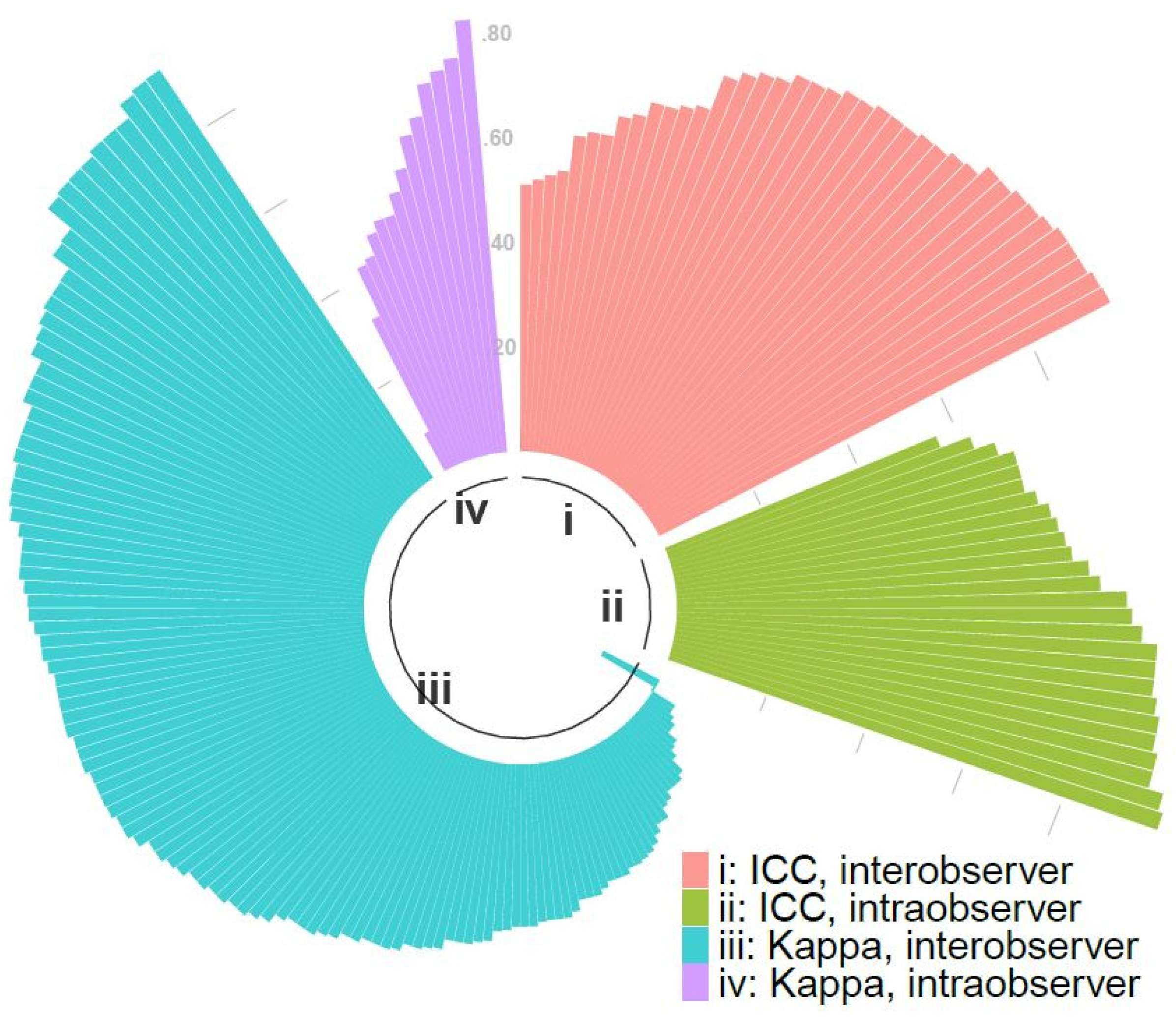

Diagnostics | Free Full-Text | Inter/Intra-Observer Agreement in Video-Capsule Endoscopy: Are We Getting It All Wrong? A Systematic Review and Meta-Analysis

Inter- and Intra-observer Variability in Biopsy of Bone and Soft Tissue Sarcomas | Anticancer Research